Arctop and the Intelligence Layer for Brain Data: Dan Furman on Decoding the Mind

Arctop CEO Dan Furman on why the future of neurotechnology may depend less on new hardware and more on the software that can finally make brain signals useful.

Interview by Chay Carter, founder of Carter Sciences and Reccy Neuro.

Dan Furman is CEO of Arctop

When people think about brain-computer interfaces, they often think first about hardware: implants, headsets, sensors, and futuristic devices. Dan Furman thinks the deeper opportunity sits elsewhere.

As Co-Founder and CEO of Arctop, Furman has spent years focused on the intelligence layer between brain-sensing hardware and the applications built on top of it. His view is that the core bottleneck in neurotechnology is not simply capturing signals, but decoding them well enough, fast enough, and personally enough to make them useful in the real world.

In this conversation, he reflects on the operating-room moment that first pulled him into neuroscience, why Arctop made an explicit bet on software rather than hardware, what the company believes it can see in brain data that others still miss, and how he thinks cognition-aware computing could reshape health, education, entertainment, and human-computer interaction more broadly.

“The human brain is constantly talking. Arctop built an ear for it.”

What originally drew you into the brain, and how did that eventually lead to founding Arctop?

It started in a surgical theatre in Newport Beach, California, when I was 16. I was a research intern under neurosurgeon Dr. Christopher Duma, watching him perform an open-brain procedure on a Parkinson’s patient. Over Dr. Duma’s shoulder, I saw the patient’s brain shimmering under the operating-room lights as the patient was gently awakened from anesthesia and spoke with the surgical team while they located the right place to implant a stimulating electrode.

The brain itself has no pain receptors, so the patient was speaking comfortably while Dr. Duma adjusted the electrode’s electrical output. As he turned the stimulation up and down, the patient’s Parkinsonian tremors got worse, then better, then completely disappeared. That was the moment I understood, viscerally, that the brain is electric. That thought never left me.

I went on to study neurobiology at Harvard. While I was there, the first iPhone came out, and I had this feeling that the intimacy between biological and computational electrical signals was going to define the coming decades. I moved away from the pre-med track, which I had started with the intention of following in Dr. Duma’s footsteps, and went deeper into advanced technology.

After graduating in 2011, I joined a small medtech startup in San Diego working in sleep technology. Within months, we were adapting the company’s at-home sleep brain-monitoring hardware to serve as a communication device for Stephen Hawking, whose ALS had progressed to the point where even his cheek-click controller was failing him. He did not want to be implanted with anything, so that ruled out the most capable neural-interface systems at the time, and we were chosen as the outside team to try to give him a non-invasive way to continue communicating.

We made meaningful progress, but the technology ultimately never reached the people who most needed it. That gap, between what science could do and what anyone could actually access, became the defining frustration of my early career.

So I went back into research. I did my PhD at the Technion’s Evoked Potentials Laboratory in Israel, where, with Professor Hillel Pratt and collaborators, we achieved something that had not been done before: we used EEG data to decode imagined finger movements with accuracy that was not perfect but far beyond chance. That level of granularity had been considered essentially impossible because of how noisy EEG signals are. What it proved to me was that scalp-measured brain signals carry far more information than the field had assumed, and that the real scaling challenge was not the brain’s inaccessibility but the unsolved problem of accurate brain-decoding software. That realization led directly to founding Arctop in the spring of 2016.

For those coming across Arctop for the first time, how do you describe what the company is building today?

At the highest level, Arctop is building the software layer that can listen to the brain in real time and translate those signals into something useful.

The simplest true thing in neuroscience is that every thought you have, every moment of focus, boredom, stress, or excitement, produces tiny electrical signals. The brain is constantly talking. For decades, scientists could record that signal in hospitals and labs with expensive hardware, but it was slow, specialist-driven, and mostly unusable for everyday life.

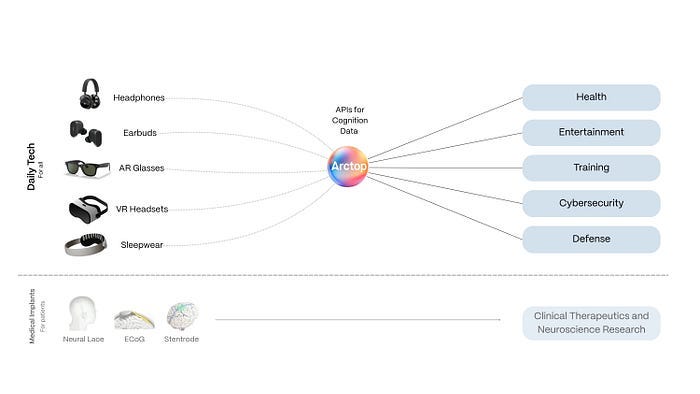

At Arctop, we asked a different question: What if software could listen to that signal in real time through low-cost sensors embedded in headphones, earbuds, glasses, or other head-worn devices, and actually understand what it means? That is the whole company. Our software sits between a wearable device and an app, and translates brain signals into something useful: Is this person focused, bored, stressed, learning, or confused?

I often use this analogy: A thermometer does not understand fever, it just reads temperature. A doctor understands what that temperature means. Arctop is trying to be the doctor for brain electricity, but running in software, in real time.

Arctop aims to leverage multi-source neural data for a range of applications